Embracing Linear Regression: A Newbie’s Guide to Data Analysis

Embark with us on a journey to discover the significance of linear regression in data analysis. If you've ever wondered what it is and what it is for, keep reading. In simple terms, linear regression is a predictive modeling technique that describes the relationship between a dependent variable and one or more independent variables by fitting a straight line (or, with several predictors, a plane or hyperplane) to the data. It is not an arbitrary curve-fitting exercise: it rests on well-defined statistical assumptions, and when those assumptions hold it offers both interpretable estimates and useful predictions. Its simplicity and effectiveness make it a valuable tool in many fields, including economics, healthcare, and technology, where it is used to improve predictions, to understand how variables relate, and to support business decisions.

As we delve deeper, we will explore the main types of linear regression models, simple and multiple linear regression, and unpack key terms such as coefficients, residuals, and R-squared. We have much ground to cover, so let's embrace linear regression together and lay the groundwork for understanding and implementing this fundamental statistical method.

Understanding the Basics of Regression Analysis

At the root of regression analysis lies the idea of quantifying relationships between variables. The process examines how one or more independent variables are associated with a dependent variable. A cornerstone of predictive analytics, regression analysis is used both to estimate effects that cannot be observed directly and to build forecasting models.

Delving deeper, regression analysis rests on certain statistical assumptions. The predictors should not be strongly correlated with one another (minimal multicollinearity). The dependent variable should have an approximately linear relationship with the independent variables, and the residuals, or 'prediction errors', should be independent, have roughly constant variance, and be approximately normally distributed. These assumptions are not arbitrary: they are the conditions under which the model's estimates, standard errors, and tests can be trusted. For instance, to understand the weather's impact on crop yield, a farmer can gather data on temperature (independent variable) and crop yield (dependent variable) over a period of time. Through regression analysis, the farmer can chart how temperature variations relate to yield, providing valuable insights for planning and forecasting. Checking these assumptions is therefore what makes the resulting model reliable and practical.

What is Linear Regression?

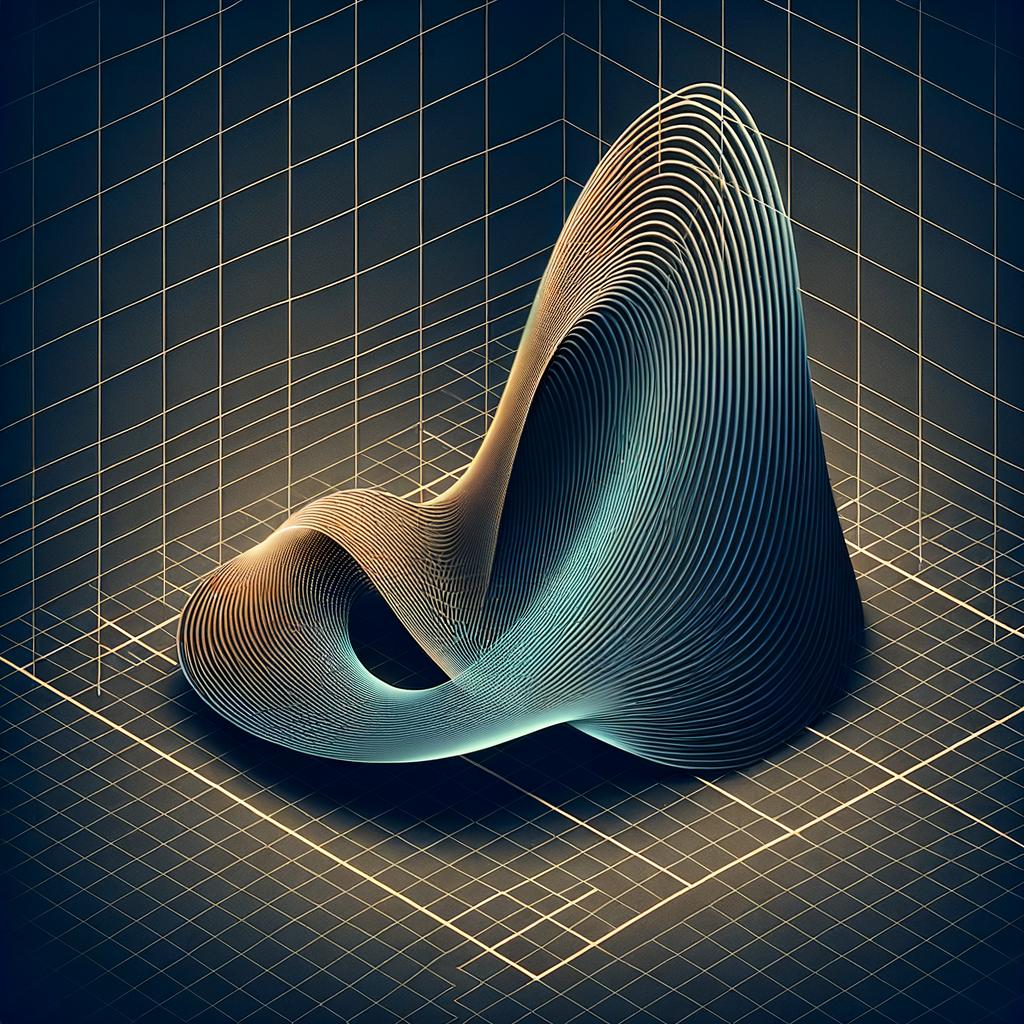

Linear regression is a statistical method for summarizing and studying the relationship between two continuous (quantitative) variables: one variable, denoted x, is regarded as the predictor, explanatory, or independent variable, and the other, denoted y, as the response, outcome, or dependent variable. (With several predictors, the same idea extends to multiple linear regression.) Linear regression uses the data points to draw the straight line that best fits them. The line generally does not pass through every point, but it summarizes the overall trend and can be used to predict new values. As a newbie, understanding this procedure will greatly enhance your data analysis skills.

On a technical level, linear regression models a continuous outcome variable, y, as a linear function of one or more predictor variables, x. It assumes a straight-line relationship between x and y and looks for the line that best fits this pattern. The most common criterion, ordinary least squares (OLS), defines the best-fit line as the one that minimizes the sum of the squared residuals, where each residual is the vertical distance between an observed value and the line's prediction. For one predictor, the model is y = b0 + b1 * x + error, where b0 is the intercept and b1 is the slope.
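To make the least-squares criterion concrete, here is a minimal sketch in Python with NumPy. The library choice and the data points are assumptions for illustration, not part of the original guide. It computes the slope and intercept of a one-predictor model with the standard closed-form OLS formulas:

```python
import numpy as np

def fit_simple_ols(x, y):
    """Return (intercept, slope) minimizing the sum of squared residuals.

    Closed form for one predictor:
        slope     = sum((x - x_mean) * (y - y_mean)) / sum((x - x_mean)**2)
        intercept = y_mean - slope * x_mean
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = x.mean(), y.mean()
    slope = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
    intercept = y_mean - slope * x_mean
    return intercept, slope

# Hypothetical, made-up data points (not from any real study).
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

intercept, slope = fit_simple_ols(x, y)
# Residual = observed value minus the line's prediction.
residuals = np.asarray(y) - (intercept + slope * np.asarray(x))
print(f"y = {intercept:.3f} + {slope:.3f} * x")
print("sum of squared residuals:", np.sum(residuals ** 2))
```

In practice you would usually call a library routine such as `numpy.polyfit` instead; the hand-written version simply exposes what "best fit" means.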

The simplicity of linear regression and its broad applicability make it one of the go-to methods for data analysis. Its purpose is often interpretive as well as predictive: the size, sign, and statistical significance of its coefficients show how each predictor relates to the outcome. For instance, in finance it is sometimes used to model stock prices or returns from past performance and market factors, and in healthcare to estimate a person's risk score for a certain disease from parameters such as age, weight, and family history.

In summary, when we talk about linear regression, we're talking about a highly adaptable and interpretable method that allows us to predict an outcome based on relevant predictors. Even if it sounds intricate now, with time and understanding, one can easily appreciate the adaptability and effectiveness of linear regression in data analysis.

The Importance of Linear Regression in Data Analysis

Linear regression plays an integral role in data analysis by improving predictions, clarifying how variables relate, and supporting informed business decisions. By quantifying the relationship between two variables, linear regression can predict the expected value of one variable from a known value of the other. For instance, a company might use linear regression to forecast sales based on advertising spend. Beyond prediction, the fitted relationship can reveal how variables are associated. For example, in a healthcare context, linear regression might reveal an association between patient age and medication effectiveness, helping medical practitioners refine treatment plans. Keep in mind that an association found this way does not by itself prove that one variable causes the other.

Furthermore, linear regression is a valuable guide for critical business decisions. Using the relationships and forecasts it provides, management can plan market strategies, adjust operations, or evaluate investments based on anticipated returns. Imagine a real estate company using linear regression to understand the relationship between location factors and property prices. The insights obtained could substantially inform its buying and selling decisions and improve profitability. In these ways, linear regression serves as a framework for both simple and complex data interpretation, which makes it invaluable in today's data-driven world.

Types of Linear Regression Models

Linear regression models come in two fundamental forms, depending on the number of predictors in your data set: simple and multiple linear regression. Simple linear regression, as the name suggests, involves one independent variable and models a straightforward, direct relationship with the dependent variable. For a real-world example, suppose a team of analysts wants to study the relationship between the outside temperature and ice cream sales. In this case, simple linear regression is a natural choice, since the analysts are examining the relationship between only two variables.

However, life is more complicated, and often, our questions are too. That's where multiple linear regression steps in. Multiple linear regression extends simple linear regression to include two or more independent variables, which lets us explore more complex relationships between our variables. For example, if the analysts want to explain ice cream sales not only by temperature but also by day of the week, they would use multiple linear regression, with the day of the week encoded as indicator (dummy) variables.
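As a minimal sketch of the ice cream example, the snippet below builds a design matrix with an intercept column, temperature, and a weekend indicator, then fits it by least squares with NumPy. Coding the day of the week as a single weekend flag is a simplifying assumption (a fuller model might use one dummy column per day minus a baseline), and the sales figures are generated from a made-up, noise-free rule so the recovered coefficients can be checked:

```python
import numpy as np

# Hypothetical daily observations: temperature (degrees Celsius) and a
# weekend flag (1 = Saturday/Sunday, 0 = weekday).
temperature = np.array([18.0, 22.0, 25.0, 30.0, 21.0, 27.0, 33.0, 24.0])
weekend = np.array([0, 0, 1, 0, 1, 0, 1, 0], dtype=float)

# Made-up sales from a known rule: 40 + 6 per degree + 55 extra on weekends.
sales = 40 + 6 * temperature + 55 * weekend

# Design matrix: a column of ones for the intercept, then one column per predictor.
X = np.column_stack([np.ones_like(temperature), temperature, weekend])

# Ordinary least squares via NumPy's least-squares solver.
coef, *_ = np.linalg.lstsq(X, sales, rcond=None)
intercept, b_temp, b_weekend = coef
print(f"intercept={intercept:.2f}, per degree={b_temp:.2f}, weekend effect={b_weekend:.2f}")
```

With real, noisy data the estimates would only approximate the underlying effects; the noise-free setup here just makes it easy to see that each coefficient isolates one predictor's contribution.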

Though they operate under similar principles, the main distinction between simple and multiple linear regression lies in the number of independent variables involved. The added complexity in multiple linear regression can lead to more nuanced and accurate predictions, but it can also invite a greater risk of overfitting and multicollinearity. However, with a thorough understanding of the concepts and careful data analysis, multiple linear regression can powerfully illustrate the impact of various factors on a single outcome.

Each form, simple and multiple linear regression, possesses unique strengths and potential pitfalls. Therefore, it's essential to understand the differences between them, including when to appropriately use one over the other. By understanding and leveraging these two types of linear regression, you can enhance your data exploration and construct stronger, evidence-based arguments in your analyses.

The Concept of Dependent and Independent Variables

To fully comprehend linear regression, it's crucial to understand the roles of dependent and independent variables within this data analysis tool. In simple terms, dependent variables are the outcomes we seek to predict or explain, while independent variables are the inputs we use to predict or explain those outcomes (in experiments, they are the factors we deliberately vary). For example, in the business landscape, a company's profit (dependent variable) could be influenced by multiple factors such as marketing spend, price of goods, and market competition (independent variables).

Analogously, consider a real estate scenario. A house’s selling price (dependent variable) could be determined by factors like its location, age, and size (independent variables). Accurate identification of these variables is key to leveraging linear regression for optimized prediction and decision-making.

In healthcare, the relationship between patient age (independent variable) and risk of a certain disease (dependent variable) could be analyzed using linear regression. Understanding these relations can help experts in the prevention and treatment of those diseases.

Furthermore, in climatology, the measure of global temperature increases (dependent variable) might be related to the rise in greenhouse gas emissions (independent variable). Clearly defining these variables can provide a factual foundation for policy debates on climate change.

This understanding of dependent and independent variables enables researchers and analysts to construct appropriate models for predictive purposes and to respond effectively to changes in the data. The correct identification and interpretation of these variables often sets the stage for the successful application of linear regression in various fields.

Key Terms Used in Linear Regression

In the realm of linear regression, several key terms play a pivotal role. First, a coefficient (often written as beta) is the slope of the best-fit line with respect to a given predictor. It shows the expected change in the dependent variable for a one-unit increase in that independent variable, holding any other predictors constant. An example might be a study to determine how many additional ice cream sales are associated with a one-degree rise in temperature.

Next, residuals, the differences between the observed and predicted values, are crucial for checking a model's accuracy. Residuals that are small overall and show no systematic pattern indicate that the model fits the observed data well.

Another term central to linear regression is R-squared, or the coefficient of determination. This statistical measure reflects the proportion of variance in the dependent variable that is explained by the independent variable(s). In a sales forecasting model, for instance, an R-squared value of 0.70 would mean that 70% of the variation in sales is accounted for by the independent variable(s).

Beyond these, several other terms are routinely used in linear regression, including the intercept, linearity, normality, and multicollinearity; understanding them helps you judge how robust the output is.
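The three core terms can be computed directly. The sketch below assumes NumPy and uses made-up temperature and ice cream figures; it fits a line, derives the residuals as observed minus predicted, and computes R-squared as one minus the ratio of unexplained to total variation:

```python
import numpy as np

def r_squared(y, y_hat):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    ss_res = np.sum((y - y_hat) ** 2)        # variation the model leaves unexplained
    ss_tot = np.sum((y - y.mean()) ** 2)     # total variation around the mean
    return 1.0 - ss_res / ss_tot

# Hypothetical temperature (deg C) and ice cream sales (units), illustrative only.
temp = np.array([14.0, 16.0, 20.0, 23.0, 26.0, 30.0])
sales = np.array([210.0, 250.0, 300.0, 360.0, 390.0, 460.0])

# np.polyfit returns coefficients highest power first: [slope, intercept].
slope, intercept = np.polyfit(temp, sales, deg=1)
predicted = intercept + slope * temp
residuals = sales - predicted                # observed minus predicted
r2 = r_squared(sales, predicted)

print(f"extra sales per degree (coefficient): {slope:.1f}")
print("residuals:", np.round(residuals, 1))
print(f"R-squared: {r2:.3f}")
```

For a simple regression with an intercept, this R-squared equals the square of the Pearson correlation between the two variables, which is a handy sanity check.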

Step-By-Step Guide to Implementing Linear Regression

Start your journey into linear regression with the first step, data collection. Gather data of sufficient quality and quantity, making sure to include the variables relevant to your objective. A comprehensive dataset lays the foundation for a successful regression model. A real-world example might involve collecting data on house prices and their attributes, such as the number of rooms, square footage, and neighborhood crime rates. By sourcing varied, pertinent data, you pave the way for more accurate predictions.

Next in line is model creation. Using the collected data, estimate the model's coefficients with a technique such as ordinary least squares (OLS). These coefficients describe the relationships between the independent variables and the response variable. Consider a beer sales prediction model: the coefficient of 'average daily temperature' indicates how much beer sales are expected to change when the temperature rises by 1 degree Celsius.
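OLS coefficients solve the so-called normal equations, (XᵀX)β = Xᵀy. The following sketch assumes NumPy and uses invented beer-sales figures; it solves that system directly (with `np.linalg.solve` rather than an explicit matrix inverse) and reads off the per-degree coefficient:

```python
import numpy as np

def ols_normal_equations(X, y):
    """Solve the OLS normal equations (X^T X) beta = X^T y.

    X must already include a leading column of ones for the intercept.
    """
    return np.linalg.solve(X.T @ X, X.T @ y)

# Hypothetical data: average daily temperature (deg C) and beer sales (litres).
temp_c = np.array([12.0, 15.0, 18.0, 21.0, 24.0, 27.0, 30.0])
litres = np.array([310.0, 335.0, 372.0, 398.0, 430.0, 452.0, 489.0])

X = np.column_stack([np.ones_like(temp_c), temp_c])
beta = ols_normal_equations(X, litres)
print(f"intercept: {beta[0]:.1f} litres")
print(f"each extra degree Celsius is associated with {beta[1]:.2f} more litres")
```

For larger or nearly collinear designs, a least-squares solver such as `np.linalg.lstsq` is numerically safer than forming XᵀX, but the normal equations show most clearly what OLS computes.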

Proceed to make predictions based on the model. Bear in mind that individual predictions carry uncertainty, so treat them as estimates rather than exact forecasts; much of the value often lies in understanding the relationship between variables. In the case of our beer sales model, we may not be able to predict tomorrow's exact sales figure, but we learn that sales tend to rise as the temperature increases.

Model evaluation typically takes two forms: statistical significance tests (for example, t-tests on individual coefficients and an F-test on the model as a whole) and goodness-of-fit measures (such as R-squared and residual analysis). Together they show how well the model fits the data and whether its estimated relationships are distinguishable from chance, so you can judge whether its results are reliable.
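As one way to see where significance tests come from, this sketch (NumPy, hypothetical beer-sales data) computes each coefficient's standard error and t-statistic under the classical OLS assumptions, alongside R-squared. Converting t-statistics into p-values needs the t distribution with n - p degrees of freedom, for example via `scipy.stats.t.sf`; that step is left out here to keep the example NumPy-only:

```python
import numpy as np

def ols_summary(X, y):
    """Coefficients, standard errors, t-statistics and R-squared for OLS.

    X must include a leading column of ones. The standard errors assume the
    classical conditions: independent errors with constant variance.
    """
    n, p = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    residuals = y - X @ beta
    sigma2 = residuals @ residuals / (n - p)       # estimated error variance
    se = np.sqrt(np.diag(sigma2 * XtX_inv))        # standard error of each coefficient
    t_stats = beta / se                            # tests H0: coefficient = 0
    r2 = 1 - residuals @ residuals / np.sum((y - y.mean()) ** 2)
    return beta, se, t_stats, r2

# Hypothetical beer-sales data (illustrative only).
temp_c = np.array([12.0, 15.0, 18.0, 21.0, 24.0, 27.0, 30.0])
litres = np.array([310.0, 335.0, 372.0, 398.0, 430.0, 452.0, 489.0])
X = np.column_stack([np.ones_like(temp_c), temp_c])

beta, se, t_stats, r2 = ols_summary(X, litres)
for name, b, s, t in zip(["intercept", "temperature"], beta, se, t_stats):
    print(f"{name:>11}: estimate={b:8.2f}  SE={s:6.2f}  t={t:6.2f}")
print(f"R-squared: {r2:.3f}")
```

A t-statistic far from zero suggests the coefficient is unlikely to be zero; how far counts as "far" depends on the chosen significance level and the degrees of freedom.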

Great! Your first linear regression model is set. Now, continue to experiment by adjusting the inputs and examining how these changes affect your results. Operations like these offer vital learning experiences that enhance your understanding of regression and fine-tune your modeling skills.

Next, don't forget to check your model against new, unseen data. This step verifies your model's reliability and the range of situations it applies to. For instance, you could test a model predicting the sales of a newly introduced product by comparing its predictions against actual sales figures.
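A simple way to check a model against unseen data is a holdout split: fit on most of the rows, then measure error on the rows the model never saw. The sketch below assumes NumPy and simulates data from a known linear rule plus noise (the rule, noise level, and 80/20 split are illustrative assumptions); it reports the root-mean-squared error (RMSE) on both parts:

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Simulated data: a true linear rule plus random noise.
x = rng.uniform(0, 10, size=100)
y = 3.0 + 2.0 * x + rng.normal(0, 1.0, size=100)

# Shuffle the row indices, then hold out 20% as "unseen" data.
idx = rng.permutation(len(x))
test_idx, train_idx = idx[:20], idx[20:]

# Fit only on the training rows.
slope, intercept = np.polyfit(x[train_idx], y[train_idx], deg=1)

def rmse(actual, predicted):
    """Root-mean-squared error between two arrays."""
    return float(np.sqrt(np.mean((actual - predicted) ** 2)))

train_rmse = rmse(y[train_idx], intercept + slope * x[train_idx])
test_rmse = rmse(y[test_idx], intercept + slope * x[test_idx])
print(f"train RMSE: {train_rmse:.3f}  test RMSE: {test_rmse:.3f}")
```

If the test error is much larger than the training error, the model is probably fitting noise rather than the underlying relationship.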

Finally, continue to hone your linear regression implementation by iterating on these steps and incorporating feedback from your evaluation and testing phases. Be sure to educate yourself along the way to develop a comprehensive understanding of linear regression and its many applications.

Data Collection: The First Step in Linear Regression

In the realm of linear regression, the first critical step is gathering the data for analysis. Both the quality and the quantity of the data shape the foundation of your analysis. Gathering substantive, relevant data sets is akin to laying the groundwork before building a house. Keep in mind that more data does not automatically reveal the true relationship between variables; the key is the quality and relevance of the data for your analysis.

It's also vital to understand the types of data at your disposal during data collection. Data analysis often involves nominal, ordinal, interval, and ratio data. Understanding these types and their uses significantly improves your ability to gather pertinent data. Linear regression expects a dependent variable measured on an interval or ratio scale, while nominal or ordinal predictors usually have to be encoded (for example, as dummy variables) before they can enter the model; checking this up front helps avoid misinterpretation.

Sourcing the data is another essential aspect of data collection in linear regression. Collecting data is one thing, but determining where to gather them requires added attention. This aspect entails deciding between primary and secondary data sources. Primary data is typically collected firsthand for your analysis, while secondary data is typically derived from existing sources like databases, reports, or studies. The decision on sourcing hinges on factors like the nature of your study, time constraints, and availability of resources.

It's important to remember that data collection, as fundamental as it is, also presents its fair share of complexities. It is essential to adopt a meticulous approach when dealing with every step, from determining the quality, amount, and type of data needed to deciding where and how to aggregate it. It is also crucial to continually verify the data throughout this process to prevent inaccuracies and data errors.

In sum, the result of a linear regression analysis is heavily influenced by the data collection process. The better the input data, the more reliable and accurate the output, allowing you to draw meaningful, valid conclusions about the relationships between variables. These conclusions support better decision-making in fields like business, economics, or health, and strengthen the contribution of linear regression to data analysis.

Running a Simple Linear Regression: A Practical Example

Let's walk through a practical example of running a simple linear regression. Suppose you're at a pizza restaurant that sells pizzas in three different sizes, and you want to predict the price based on the size of the pizza. This scenario features one independent variable (pizza size) and one dependent variable (price), making it an ideal example for simple linear regression.

Initially, data collection is paramount. You'd need to record the size of the pizza and its corresponding price. Let's say you have data on 20 different pizzas. This data is then plotted on a graph: the horizontal axis representing pizza size and the vertical axis representing the price. With these data points plotted, what's noticeable is a general upward trend, i.e., the bigger the pizza, the higher the price.

Next up is model creation. You use your collected data to find the straight line that best fits your data points. This involves calculating the slope and intercept of the line to obtain a mathematical representation, the linear regression equation, which you can use to predict new outputs. For instance, the slope indicates how much the pizza price changes, on average, for each one-unit increase in pizza size (for example, each additional inch of diameter).
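Here is a small sketch of the pizza example, assuming NumPy and a handful of invented records (three sizes measured in inches of diameter, prices in dollars). With these particular numbers the slope works out to 1.25 dollars per inch and the intercept to -3.50:

```python
import numpy as np

# Hypothetical records: diameter in inches (three sizes) and price in dollars.
size_in = np.array([10, 10, 10, 12, 12, 12, 14, 14, 14], dtype=float)
price = np.array([8.5, 9.0, 9.5, 11.0, 11.5, 12.0, 13.5, 14.0, 14.5])

# Fit price = intercept + slope * size; np.polyfit returns [slope, intercept].
slope, intercept = np.polyfit(size_in, price, deg=1)
print(f"price = {intercept:.2f} + {slope:.2f} * size")

# Predict a 16-inch pizza. This extrapolates beyond the observed sizes,
# so the result should be treated with caution.
pred_16 = intercept + slope * 16
print(f"predicted 16-inch price: ${pred_16:.2f}")
```

Note that the intercept has no practical meaning here (nobody sells a 0-inch pizza); it only positions the line within the range of sizes actually observed.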

Finally, it's time to make predictions and evaluate your model. You can now use your linear regression model to predict pizza prices from their size. To assess how well the model fits, one usually calculates the R-squared statistic. An R-squared value close to 1 means that the model explains a high proportion of the variance in pizza prices based on size. That is a good sign, although you should also check the residuals and the model's performance on new data before calling the model successful.

Interpreting Linear Regression Results

When it comes to interpreting the results of linear regression, there are a few key components. Firstly, you should understand the coefficients. Each coefficient in a linear regression equation represents the expected change in the dependent variable for a one-unit change in the corresponding independent variable, while the other variables remain constant. For example, in predicting a house's price based on its size and age, the coefficient for size could show that each additional square foot raises the expected price by $500, assuming the age doesn't change.

Secondly, interpreting the R-squared statistic is essential. For a least-squares model with an intercept, this statistic ranges from 0 to 1 and indicates how well the model fits the data. An R-squared of 1 means the model perfectly predicts the dependent variable in the sample, while 0 means it explains none of the variability. For instance, an R-squared of 0.75 in predicting students' GPA from their study time would mean that 75% of the variation in GPAs is accounted for by study time.

Lastly, residuals, the differences between observed and predicted values, are crucial for recognizing the prediction errors in your model. If the residuals are randomly scattered around zero with no visible pattern, that supports the model's assumptions. If a pattern appears, such as a curve or a funnel shape, adjustments may be needed.

Linear Regression Assumptions You Should Know

Three assumptions are especially critical: linearity, normality of the residuals, and homoscedasticity. (A fourth, independence of the errors, matters too, particularly for data collected over time.) It's crucial to be aware that these are not optional extras in applying linear regression; they're fundamental to the model's proper functioning.

Your data's relationship should be linear; that's the core idea behind linear regression. This assumption means that a one-unit increase in the independent variable changes the expected value of the dependent variable by a constant amount, regardless of the starting point. For instance, in a clothing business, every $10 increase in advertising spend might translate into about 20 extra shirts sold, whether spending rises from $100 to $110 or from $500 to $510.

The normality assumption concerns the prediction errors, the differences between the observed and predicted values. Ideally, these errors follow what statisticians call a 'normal distribution': plotted as a histogram, they form the familiar bell-curve shape. Imagine a teacher grading a large number of assignments: most grades cluster around the middle, with fewer very high or very low marks. That is the shape normally distributed errors take. This assumption matters most for confidence intervals and hypothesis tests, especially in small samples.

The final key assumption is homoscedasticity, which concerns the spread of your residuals. It means that the variance (spread) of the residuals stays roughly constant across all levels of the independent variables (equivalently, across the predicted values). Picture a dart player aiming at different spots on the board: the darts should scatter about equally widely whether the player aims high or low. If the scatter grows wider in one region, that is heteroscedasticity, and the model's standard errors and significance tests become unreliable.

In essence, embracing linear regression requires getting to grips with these fundamental assumptions that lay the foundation of your data analysis journey.

Common Problems in Linear Regression and Their Solutions

Linear regression, though a powerful tool, can present problems such as multicollinearity. Multicollinearity arises when two or more predictor variables are highly correlated, which makes individual coefficient estimates unstable and hard to interpret. It can be detected with the Variance Inflation Factor (VIF) and mitigated by removing or combining correlated variables or by using ridge regression.
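The VIF for a predictor is 1 / (1 - R²), where R² comes from regressing that predictor on all the others. The sketch below implements this with NumPy on simulated predictors, two nearly identical and one unrelated. The simulated data are an assumption for illustration; a commonly cited rule of thumb treats VIF values above roughly 5 or 10 as a warning sign:

```python
import numpy as np

def variance_inflation_factors(X):
    """VIF for each column of X (predictors only, no intercept column).

    VIF_j = 1 / (1 - R^2_j), where R^2_j comes from regressing column j
    on all the other columns plus an intercept.
    """
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    vifs = []
    for j in range(k):
        target = X[:, j]
        others = np.delete(X, j, axis=1)
        A = np.column_stack([np.ones(n), others])
        coef, *_ = np.linalg.lstsq(A, target, rcond=None)
        resid = target - A @ coef
        r2 = 1 - resid @ resid / np.sum((target - target.mean()) ** 2)
        vifs.append(1.0 / (1.0 - r2))
    return np.array(vifs)

rng = np.random.default_rng(1)
x1 = rng.normal(size=200)
x2 = x1 + rng.normal(scale=0.1, size=200)   # nearly a copy of x1
x3 = rng.normal(size=200)                   # unrelated predictor

vifs = variance_inflation_factors(np.column_stack([x1, x2, x3]))
print(np.round(vifs, 1))
```

The two near-duplicate columns receive very large VIFs, while the independent column stays close to 1.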

Another common issue in linear regression is heteroscedasticity, where the residuals (the differences between the actual and predicted values) have unequal variance across the range of fitted values. The OLS coefficient estimates remain unbiased, but they are no longer the most efficient, and the usual standard errors become unreliable, which distorts confidence intervals and significance tests. Strategies for handling heteroscedasticity include visual inspection of residual plots, formal tests such as the Breusch-Pagan / Cook-Weisberg test, and transformations of the dependent variable such as logarithmic, square-root, or inverse transformations.
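To illustrate the idea behind the Breusch-Pagan test, this sketch implements Koenker's studentized variant by hand with NumPy: fit the model, regress the squared residuals on the predictor, and compute n times the R² of that auxiliary regression. With one predictor the statistic is compared against a chi-squared distribution with 1 degree of freedom (5% critical value about 3.84). The simulated data are assumptions for illustration; in practice, statsmodels offers a ready-made `het_breuschpagan` function:

```python
import numpy as np

def breusch_pagan_lm(x, y):
    """Koenker's studentized Breusch-Pagan LM statistic for one predictor.

    Fit y on x, regress the squared residuals on x, and return n * R^2
    of that auxiliary regression. Under homoscedasticity it is roughly
    chi-squared with 1 degree of freedom.
    """
    X = np.column_stack([np.ones_like(x), x])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    u2 = (y - X @ beta) ** 2                       # squared residuals
    gamma, *_ = np.linalg.lstsq(X, u2, rcond=None)
    fitted = X @ gamma
    r2_aux = 1 - np.sum((u2 - fitted) ** 2) / np.sum((u2 - u2.mean()) ** 2)
    return len(y) * r2_aux

rng = np.random.default_rng(2)
x = rng.uniform(1, 10, size=300)
y_const = 2 + 3 * x + rng.normal(0, 1, size=300)       # constant spread
y_fan = 2 + 3 * x + rng.normal(0, 1, size=300) * x     # spread grows with x

lm_const = breusch_pagan_lm(x, y_const)
lm_fan = breusch_pagan_lm(x, y_fan)
print(f"constant variance: LM = {lm_const:.2f}")
print(f"fanning variance:  LM = {lm_fan:.2f}")
```

The "fanning" data, whose noise grows with x, should produce an LM statistic well above the critical value, flagging heteroscedasticity.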

Outliers can distort the regression line and reduce the model's predictive accuracy. They can be identified with scatter plots or with diagnostics such as Cook's distance and leverage values. Once identified, and after checking whether they are simply data errors, they can be handled by deletion, transformation, or robust regression methods.
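Leverage measures how unusual a point's predictor values are, and Cook's distance combines leverage with the size of the residual to estimate how much the fit would change without that point. Here is a NumPy sketch on made-up data with one deliberately unusual observation; the 4/n cutoff is one common rule of thumb, not a universal standard:

```python
import numpy as np

def leverage_and_cooks_distance(X, y):
    """Return leverage h_ii and Cook's distance D_i for each observation.

    X must include the intercept column. With p columns and MSE = RSS / (n - p):
        D_i = e_i^2 / (p * MSE) * h_ii / (1 - h_ii)^2
    """
    n, p = X.shape
    H = X @ np.linalg.inv(X.T @ X) @ X.T     # hat matrix
    h = np.diag(H)                           # leverage of each row
    beta = np.linalg.solve(X.T @ X, X.T @ y)
    e = y - X @ beta
    mse = e @ e / (n - p)
    cooks = (e ** 2 / (p * mse)) * h / (1 - h) ** 2
    return h, cooks

# Hypothetical data: ten points near y = 2x + 1, plus one far-out point at x = 20.
x = np.array([1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 20], dtype=float)
y = np.array([3.1, 4.9, 7.2, 8.8, 11.1, 13.0, 14.9, 17.2, 18.8, 21.1, 5.0])
X = np.column_stack([np.ones_like(x), x])

h, cooks = leverage_and_cooks_distance(X, y)
flagged = np.flatnonzero(cooks > 4 / len(y))
print("leverage:", np.round(h, 2))
print("Cook's distance:", np.round(cooks, 2))
print("flagged rows:", flagged)
```

The last observation has both the highest leverage and by far the largest Cook's distance, so it is the one to investigate before deciding whether to keep it.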

Inadequate model specification occurs when the model does not correctly represent the relationship between the variable of interest and the predictors. A common form is omitting significant variables or including irrelevant ones; another is using the wrong functional form. The first is addressed by revisiting which variables belong in the model, and the second by including relevant interaction effects or polynomial terms.

Sometimes, although normality of the residuals is assumed, the residuals show evidence of skewness or excess kurtosis. In such cases, a Box-Cox transformation of the (strictly positive) dependent variable can bring the residuals closer to normal.

Finally, in certain cases, there can be a lack of fit. Here, the non-linearity of the data can be dealt with by incorporating polynomial features or by techniques like piecewise regression.

Knowing these potential issues and solutions can lead to more effective implementation of linear regression in real-life analysis.

Improving Your Linear Regression Model

Enhancing the predictive power of your linear regression model can involve several more sophisticated techniques. Including interaction effects lets the model capture situations where the effect of one independent variable on the outcome depends on the value of another. To illustrate, consider an e-commerce business trying to predict sales. Customer income and marketing outreach might each be associated with sales on their own. However, an interaction term between income and outreach may yield additional insight, since a higher-income customer may respond differently to marketing than a lower-income one.
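An interaction is added by including the product of two predictors as an extra column. The sketch below uses NumPy with simulated data in which, by assumption, outreach helps more for higher-income customers (all variable names, units, and coefficients are hypothetical). The fitted interaction coefficient then shows how the effect of outreach shifts with income:

```python
import numpy as np

rng = np.random.default_rng(3)
n = 500
income = rng.uniform(20, 120, size=n)      # hypothetical income, thousands
outreach = rng.uniform(0, 10, size=n)      # hypothetical marketing contacts

# Simulated rule: outreach has a larger effect for higher-income customers.
sales = (5 + 0.1 * income + 1.0 * outreach
         + 0.05 * income * outreach + rng.normal(0, 2, size=n))

# The interaction enters as an extra column: income * outreach.
X = np.column_stack([np.ones(n), income, outreach, income * outreach])
coef, *_ = np.linalg.lstsq(X, sales, rcond=None)
b0, b_income, b_outreach, b_inter = coef

# The estimated effect of one extra contact now depends on income.
for inc in (30, 100):
    effect = b_outreach + b_inter * inc
    print(f"income {inc}k: each contact adds about {effect:.2f} in sales")
```

The model is still linear in its coefficients, so ordinary least squares fits it without modification; only the interpretation of the main effects changes.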

Data transformations and polynomial terms can also lead to more powerful models. Polynomial regression, an extension of linear regression, models nonlinear relationships between the independent and dependent variables by adding terms such as x squared; it remains linear in its coefficients, so it can still be fitted by least squares. This is useful when relationships are not strictly linear, such as diminishing returns to advertising spend. Data transformation, on the other hand, may involve log, square-root, or inverse transformations to stabilize variance or simplify relationships in the data, thereby improving the accuracy of your predictions.
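To see a polynomial term at work, this NumPy sketch compares a straight line with a model that adds a squared term, on a made-up, noise-free diminishing-returns curve (the curve is an assumption for illustration). Both are fitted by least squares; only the quadratic model captures the bend:

```python
import numpy as np

# Hypothetical diminishing-returns pattern: each extra unit of spend helps less.
spend = np.linspace(0, 10, 21)
response = 50 + 12 * spend - 0.6 * spend ** 2   # noise-free for illustration

# A straight line versus a model with an added squared term.
lin = np.polyfit(spend, response, deg=1)
quad = np.polyfit(spend, response, deg=2)

def sse(coefs):
    """Sum of squared errors of a fitted polynomial on this data."""
    return float(np.sum((response - np.polyval(coefs, spend)) ** 2))

print(f"SSE straight line: {sse(lin):.2f}")
print(f"SSE with squared term: {sse(quad):.2e}")
print("quadratic coefficients (highest power first):", np.round(quad, 3))
```

Higher polynomial degrees fit the training data ever more closely, so the degree should be chosen with validation on held-out data rather than by training error alone.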

Advanced Techniques in Linear Regression

Extending linear regression beyond the standard approach can help with complex datasets and improve predictive accuracy. Ridge, Lasso, and ElasticNet are such advanced methods. Ridge regression helps combat overfitting, a common issue in which the model conforms too closely to the training data and then performs poorly on new data. Ridge reduces model complexity through L2 regularization, which shrinks the coefficients toward zero without setting them exactly to zero; it is also effective when predictors are highly correlated. Lasso, or Least Absolute Shrinkage and Selection Operator, uses L1 regularization, which can drive some coefficients exactly to zero and so performs feature selection. This can be especially useful in models burdened with unnecessary features. ElasticNet combines the Ridge and Lasso penalties, balancing Ridge's shrinkage with Lasso's selection ability, which lets it handle multicollinearity and feature selection together. Such techniques show the potential of advanced linear regression.
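Ridge regression has a closed-form solution, which makes its shrinkage easy to observe. The sketch below (NumPy, simulated and highly correlated predictors, both assumptions for illustration) centers the data so the intercept is not penalized, then solves (XᵀX + αI)β = Xᵀy for several penalty strengths α; α = 0 reproduces ordinary least squares. Lasso and ElasticNet have no closed form and are usually fitted with iterative solvers, such as the `Lasso` and `ElasticNet` classes in scikit-learn's `linear_model` module:

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Ridge regression via the closed form on centered data.

    Centering X and y leaves the intercept unpenalized:
        beta = (Xc^T Xc + alpha * I)^(-1) Xc^T yc
        intercept = y_mean - x_mean @ beta
    """
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    k = X.shape[1]
    beta = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(k), Xc.T @ yc)
    return y_mean - x_mean @ beta, beta

rng = np.random.default_rng(4)
x1 = rng.normal(size=100)
x2 = x1 + rng.normal(scale=0.05, size=100)     # highly correlated with x1
X = np.column_stack([x1, x2])
y = 3 * x1 + rng.normal(size=100)

for alpha in (0.0, 1.0, 10.0):
    intercept, beta = ridge_fit(X, y, alpha)
    print(f"alpha={alpha:>4}: coefficients={np.round(beta, 2)}")
```

As α grows, the coefficient vector shrinks and the unstable split of the effect between the two correlated predictors evens out. In practice, predictors are usually standardized before applying ridge so the penalty treats them on a common scale.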

Applications of Linear Regression in Real-life Scenarios

Linear regression thrives in numerous real-world scenarios due to its versatility. In the economic sector, it's used to predict and analyze trends. For instance, economists use this approach to estimate the relationship between interest rates and the stock market, which helps inform decision making. Retailers also apply linear regression to estimate sales based on factors like holidays, advertising spend, and competitors' actions.

Healthcare also relies heavily on linear regression. Medical researchers use it to relate health outcomes to dietary habits, genetic predispositions, and lifestyle choices, and such analyses contribute to many important research findings.

Technology is not left behind in harnessing the power of linear regression. The ever-evolving field of machine learning leans on regression for predictive modeling. For example, tech firms utilize it to predict customer behavior, such as foreseeing churn rate or estimating user growth. The insights gleaned from these predictions allow businesses to optimize strategies and stay competitive.

Linear regression applies to far more scenarios than can be listed here. From predicting property prices to estimating crop yields and studying climate change patterns, its applications are far-reaching, and its capacity to analyze and predict has made it a cornerstone tool in many fields.

Pitfalls to Avoid in Linear Regression

Even a solid understanding of linear regression can be undermined if common pitfalls are overlooked. One major error to avoid is overfitting. Overfitting tends to occur when the model is overly complex and fits the training data too closely. As a result, it makes poor predictions on new data. To avoid overfitting, keep your model as simple as the problem allows and validate it on data it was not trained on, for example with a held-out test set or cross-validation.
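k-fold cross-validation is one systematic way to detect overfitting: the data are split into k parts, and each part serves once as the validation set while the model is fitted on the rest. The sketch below uses NumPy with simulated, truly linear data (an assumption for illustration) and compares polynomial fits of increasing degree by their average held-out mean squared error:

```python
import numpy as np

def kfold_mse(x, y, degree, k=5, seed=0):
    """Average held-out mean squared error of a polynomial fit over k folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(x)), k)
    errors = []
    for i in range(k):
        test = folds[i]
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        coefs = np.polyfit(x[train], y[train], deg=degree)
        pred = np.polyval(coefs, x[test])
        errors.append(np.mean((y[test] - pred) ** 2))
    return float(np.mean(errors))

rng = np.random.default_rng(5)
x = np.sort(rng.uniform(0, 1, size=20))
y = 1 + 2 * x + rng.normal(0, 0.2, size=20)    # truly linear, simulated

for degree in (1, 3, 9):
    print(f"degree {degree}: cross-validated MSE = {kfold_mse(x, y, degree):.4f}")
```

The degree-9 model fits each training fold almost perfectly but generalizes worse, which shows up as a higher cross-validated error than the simple straight line.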

Secondly, the assumption of a linear relationship between variables is not always valid, so check it before relying on linear regression. Scatter plots and residual plots reveal the shape of the relationship, while correlation coefficients (for example, in a correlation matrix) measure the strength of linear association.

Additionally, multicollinearity can skew your linear regression results. This phenomenon emerges when independent variables closely correlate with each other, making it difficult to ascertain each one's individual impact on the dependent variable. Techniques such as VIF (Variance Inflation Factor) testing can identify multicollinearity in your model.

Remember, linear regression is not a one-size-fits-all tool. It’s fundamental to thoroughly assess your data to ensure linear regression is the appropriate model. Don't rush into the glamorous complexity of data analysis without a strong grounding in the fundamentals.

Ultimately, regression analysis is a robust tool when wielded correctly. Avoid these pitfalls, check your learning frequently, and practice continuously; soon, you'll find your way toward becoming a proficient data analyst.

Resources to Further Your Understanding of Linear Regression

For those looking to deepen their knowledge in linear regression, there's an abundance of resources available. These include informative blogs such as 'Towards Data Science' and 'KDNuggets', which offer not only theoretical explanations but also practical guides with real-world examples. They demonstrate how the concepts can be applied to realistic scenarios in a digestible way.

Moreover, comprehensive books like 'An Introduction to Statistical Learning' and 'Applied Linear Statistical Models' give a detailed, rigorous treatment of the subject. Online courses on platforms like 'Coursera' and 'edX' offer structured learning approaches, often with expert-led videos. Joining relevant forums like 'Cross Validated' on Stack Exchange can offer peer support and allow you to bounce ideas off other learners in the field.

Conclusion: Embrace Linear Regression in Data Analysis

Linear regression, with its simplicity and flexibility, is a formidable tool in data analysis. Its ability to quantify relationships between variables and make useful predictions makes it a cornerstone in many professional fields. Implementing it follows a clear sequence of steps, from data collection to model evaluation.

However, true mastery lies in interpreting results and improving the model. Understanding coefficients, residuals, and the R-squared statistic is crucial, as is recognizing and addressing common issues such as multicollinearity and heteroscedasticity. Advanced techniques like Ridge, Lasso, and ElasticNet can improve the model further.

Beyond the mathematics, the choice of variables plays an important role. A clear understanding of dependent and independent variables, as well as interaction effects and polynomial terms, is fundamental. Yet it's important not to get lost in the data: pitfalls like overfitting and wrongly assuming linear relationships should be conscientiously avoided.

The applications of linear regression extend far and wide, touching various facets of life. From economics to healthcare, technology to the social sciences, linear regression anchors reliable predictions and strategic decisions. This ubiquitous utility ultimately underlines its indispensable role in our society.

Finally, continuous learning and application in different scenarios is key to mastering linear regression. The wealth of resources available – blogs, forums, online courses, and books – can only enhance understanding and application.

The journey to understanding and harnessing the power of linear regression is challenging, but rewarding. Embrace it, grasp its potential, and use it as a beacon in the exploration of data analysis.